| Task | Best Approach | Why | Risk |
|---|---|---|---|
| Choose a model for production coding | Claude Opus 4.7 (87.6% SWE-bench Verified, independently confirmed) | Strongest publicly verified coding benchmark position | GPT-5.5 claims a higher score but it is vendor-reported and unverified on the primary leaderboard |
| Choose a model for scientific reasoning | Gemini 3.1 Pro (94.3% GPQA Diamond, 44.7% HLE) | Top scores on the hardest reasoning benchmarks per independent aggregators | Top-three models score within margin of error; differences may not be meaningful in practice |
| Minimize cost at scale | DeepSeek V4 Pro or Flash (MIT license, 1/7th cost of Claude Opus 4.7) | Near-frontier performance at dramatically lower cost | Falls behind closed frontier models on the hardest reasoning and agentic benchmarks |

| Option | When to Use | Strength | Limitation |
|---|---|---|---|
| Claude Opus 4.7 | Coding, agentic workflows, low-hallucination requirements | Strongest verified coding scores; lowest reported hallucination rate | Expensive; BrowseComp dropped from 4.6 to 4.7 |
| GPT-5.5 | Computer use, terminal automation, single-tool-does-everything workflows | Tops composite intelligence index; native OpenAI tool stack integration | Key coding lead claim is vendor-reported and unverified |
| Gemini 3.1 Pro | Scientific reasoning, multimodal, large-document analysis | Best price-to-capability ratio of frontier closed models; 1M-2M token context | Not frontier-leading on agentic coding or computer use |
| DeepSeek V4 | Cost-sensitive workloads at scale; data sovereignty via self-hosting | MIT license; competitive on coding and math at fraction of cost | One generation behind on hardest reasoning benchmarks per DeepSeek's own assessment |

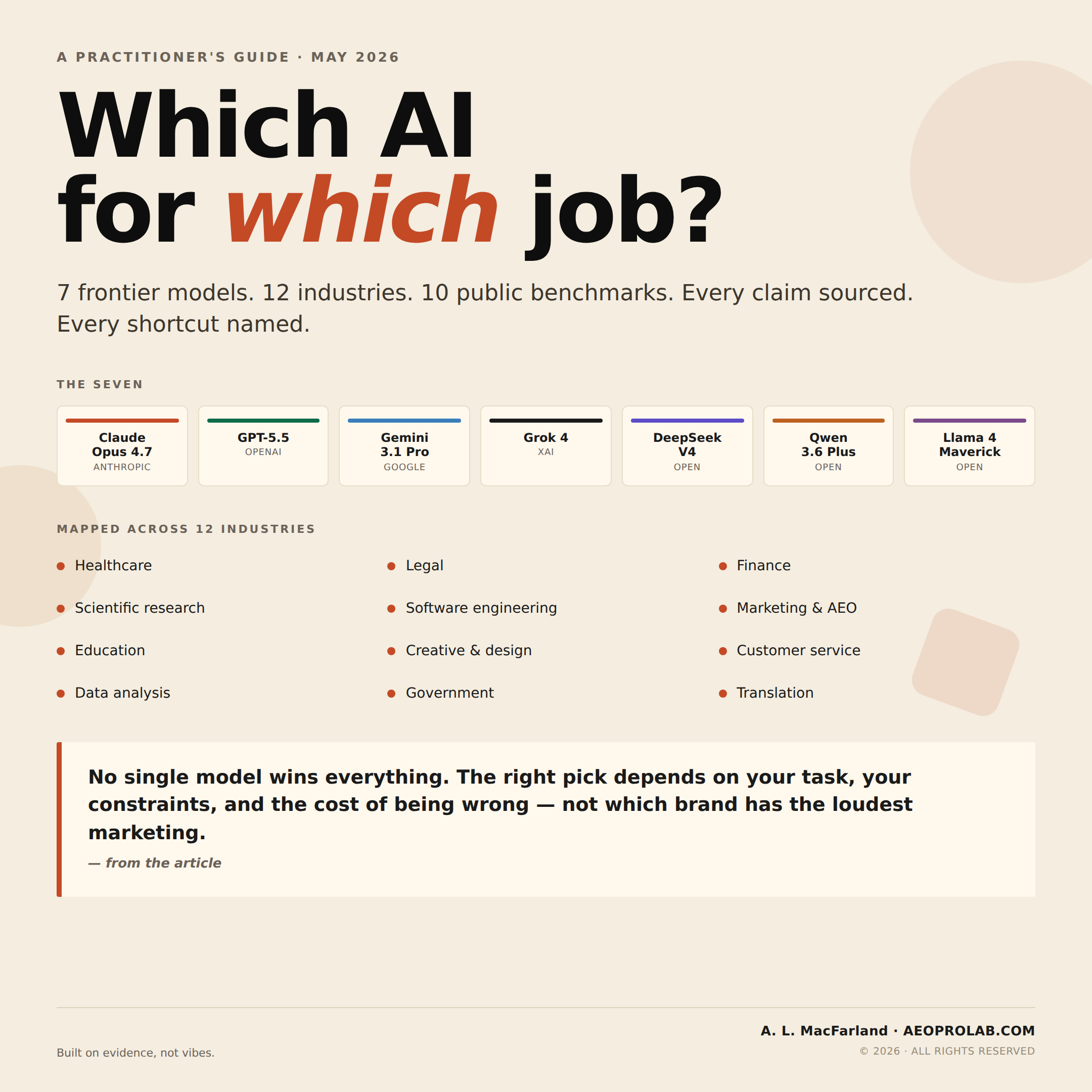

TL;DR: Best AI Model by Use Case (May 2026)

There is no single "best" AI model. As of May 2026, the best-supported current fits per public evidence are:

- Coding and software engineering: Claude Opus 4.7 is the strongest publicly supported coding fit (87.6% on SWE-bench Verified per the official leaderboard; OpenAI reports a higher GPT-5.5 score in release notes that has not been independently verified)

- Scientific reasoning and PhD-level science: Gemini 3.1 Pro is the best-supported current fit (94.3% on GPQA Diamond, 44.7% on HLE per Artificial Analysis; top-three scores within margin of error)

- Computer use and terminal automation: GPT-5.5 (78.7% OSWorld-Verified)

- Long-context document analysis: Gemini 3.1 Pro (1M-2M token window)

- Cost-sensitive workloads at scale: DeepSeek V4 Pro or Flash (open weight, 1/7th the cost of Claude Opus 4.7)

- Chinese and Asian language work: Qwen 3.6 Plus (Apache 2.0, leading open-weight multilingual model)

- Largest open-weight context window: Llama 4 Scout (10M tokens)

The right model for your work depends on the cost of being wrong, your data sovereignty constraints, and your task category. This guide breaks each down with public benchmark sources.

Evidence note: This guide separates independently visible leaderboard results from vendor-reported claims, aggregator snapshots, and practitioner judgment. Treat exact benchmark scores as time-sensitive. Treat the selection framework as the durable part.

Quick reference: Best-supported AI model fit by industry

| Industry | Best-supported current fit | Why |
|---|---|---|
| Healthcare and medicine | Claude Opus 4.7 | Reported by Artificial Analysis as having one of the lowest hallucination rates among frontier models |
| Legal | Gemini 3.1 Pro (long docs) / Claude Opus 4.7 (drafting) | 1M context for case files; cleaner legal prose per practitioner observation |
| Finance and accounting | GPT-5.5 (spreadsheets) / Claude Opus 4.7 (modeling) | OSWorld lead per OpenAI release notes; FinanceAgent benchmark lead per Anthropic |
| Scientific research / physics / chemistry / biology | Gemini 3.1 Pro | Top GPQA Diamond and HLE scores per Artificial Analysis |
| Software engineering | Claude Opus 4.7 | Strongest publicly supported SWE-bench Verified score on swebench.com |
| Marketing, content, SEO, and AEO | Claude Opus 4.7 (prose) / Gemini 3.1 Pro (audits) | Practitioner observation; long-context site audits |
| Education | Claude Opus 4.7 | Clarity plus reportedly lower hallucination rate |
| Creative and design | Claude Opus 4.7 (writing) / Gemini 3.1 Pro (video) | Practitioner observation; Video-MME lead per aggregators |
| Customer service | Claude Sonnet 4.6 or Haiku 4.5 | Cost-efficient with adequate quality for most workloads |
| Data analysis | GPT-5.5 (Python) / Claude Opus 4.7 (SQL) | Code Interpreter integration; coding lead per swebench.com |
| Government and public sector | Claude or GPT on FedRAMP infra; DeepSeek V4 self-hosted | Compliance routing; data sovereignty |
| Translation / multilingual | Qwen 3.6 Plus (Asian) / Gemini 3.1 Pro (broad) | Open-weight multilingual lead; broadest coverage per aggregators |

These are starting points, not prescriptions. Always run your own evaluation on your actual workflow before standardizing.

Key takeaways

- No model wins everything. Coding, reasoning, multimodal, computer use, and cost-efficiency are each led by different models in May 2026.

- Reasoning is statistically tied at the top. GPQA Diamond differences within ~0.5 points across Claude Opus 4.7, Gemini 3.1 Pro, and GPT-5.5 are not meaningful.

- Open-weight models have largely caught up on cost-efficiency, but the hardest reasoning, computer-use, and agentic benchmarks still favor closed frontier models. DeepSeek V4 is publicly positioned as near-frontier rather than frontier on those benchmarks.

- Vendor-reported numbers are not the same as independently verified numbers. Several "leadership" claims in 2026 marketing rest on vendor release notes, not primary leaderboards.

- The right model depends on the cost of being wrong. Match capability to task stakes; the cheapest model that clears the threshold is the right pick.

How to read this guide: confidence tags

Major model-selection claims in this article carry a confidence tag. The tags exist because "X model leads on Y" ranges from "verified on an independent leaderboard updated yesterday" to "the vendor said so in a release blog." Both get cited the same way in most AI guides. They shouldn't.

- [Primary] — Independent benchmark leaderboard, third-party verified. Most trustworthy. Examples: swebench.com, lmarena.ai, Scale AI SEAL.

- [Aggregator] — Multi-source aggregator (llm-stats.com, Artificial Analysis, BenchLM, Vellum) that pulls from primary sources and self-reported numbers, with methodology disclosed. Trustworthy if you check their methodology page.

- [Vendor] — From the model lab's own published materials (release blog, model card, technical report). Useful but optimized for marketing.

- [Judgment] — Practitioner consensus from developers working with the models in production. Useful, but not a measurement.

- [Insufficient] — Claim being made, but the public evidence is thin or contested. Read these as "this might be true; verify before betting on it."

Why there is no "best" AI model

There is only the cheapest model that reliably clears the bar for the task you care about. Anyone selling you a one-line answer to "which AI should I use" is selling either a product or an opinion, and usually both.

Every model has a capability ceiling and a price floor. For your specific task, you want the lowest price floor among the models whose capability ceiling clears what the task requires. Anything beyond that is wasted money.

That math changes by task. A customer service chatbot doesn't need PhD-level reasoning. A drug interaction checker probably does. A blog post draft is fine on a $0.30/M-token model. A legal contract analysis on the same model is malpractice. The model you pick for one job is almost never the right model for another.
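The selection rule can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a procurement tool: the prices and SWE-bench Verified scores are the ones quoted elsewhere in this guide, while the `required_score` thresholds and the 75/25 input-to-output token mix are hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    input_price: float   # USD per million input tokens
    output_price: float  # USD per million output tokens
    score: float         # benchmark score for the task category, in percent


def blended_price(m: Model, input_share: float = 0.75) -> float:
    """Average USD per million tokens for an assumed input/output mix."""
    return input_share * m.input_price + (1 - input_share) * m.output_price


def cheapest_clearing(models, required_score):
    """Return the cheapest model whose score meets the bar, or None."""
    eligible = [m for m in models if m.score >= required_score]
    return min(eligible, key=blended_price, default=None)


# Prices and SWE-bench Verified scores as quoted in this guide (May 2026).
# The GPT-5.5 score is vendor-reported and unverified.
CANDIDATES = [
    Model("Claude Opus 4.7", 5.00, 25.00, 87.6),
    Model("GPT-5.5", 5.00, 30.00, 88.7),
    Model("Gemini 3.1 Pro", 2.50, 15.00, 80.6),
]

# Hypothetical bars: a routine refactoring job, then a high-stakes agent,
# then a bar that no listed model clears.
for bar in (75.0, 85.0, 95.0):
    pick = cheapest_clearing(CANDIDATES, required_score=bar)
    print(bar, "->", pick.name if pick else "no model clears this bar")
```

Raising the bar changes the answer even though the candidate list stays the same, which is why a single "best model" ranking is not useful.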

Common myths about AI models, answered

Is GPT-5.5, Claude Opus 4.7, or Gemini 3.1 Pro the best AI model overall?

None is. Each leads on different benchmarks, and the benchmarks themselves measure different things [Aggregator]. Claude Opus 4.7 holds the strongest publicly supported SWE-bench Verified position, while GPT-5.5's higher claimed score remains vendor-reported. GPT-5.5 ranks highest on the Artificial Analysis Intelligence Index. Gemini 3.1 Pro Preview leads Humanity's Last Exam on Artificial Analysis. Anyone telling you one of them dominates is either out of date or selling something.

Has open-source AI caught up to closed-source AI in 2026?

Partly. DeepSeek V4 (released April 24, 2026), Qwen 3.6, and Llama 4 Maverick are competitive on cost and on many benchmarks [Aggregator]. DeepSeek V4 is publicly positioned as near-frontier, but still behind the strongest closed frontier models on the hardest reasoning and agentic benchmarks [Vendor + Aggregator]. The Council on Foreign Relations' independent analysis reached a similar conclusion. "Caught up" is true if your task is moderate. "Caught up" is overstating it if your task is at the frontier.

Does a bigger context window mean better long-document recall?

No. Long-context performance degrades through the middle of the window, a phenomenon documented in multiple peer-reviewed papers as "lost in the middle" [Primary]. A model with a 1M-token context window often recalls a fact buried at position 500K less reliably than the same fact in a 50K-token prompt. For reliable recall over long documents, retrieval-augmented generation (RAG) usually beats dumping the whole document into context [Judgment].
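A minimal sketch of the retrieval step in RAG, assuming a toy word-overlap scorer in place of a real embedding model. The names `chunk_text`, `tokenize`, and `retrieve`, the chunk size, and the sample contract sentence are all hypothetical and chosen for illustration. The only point is that the model receives a few relevant chunks instead of the whole document.

```python
import re


def chunk_text(text: str, chunk_words: int = 50) -> list[str]:
    """Split a document into consecutive chunks of about chunk_words words."""
    words = text.split()
    return [
        " ".join(words[i:i + chunk_words])
        for i in range(0, len(words), chunk_words)
    ]


def tokenize(text: str) -> set[str]:
    """Lowercased word set; a stand-in for a real embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(chunks, key=lambda c: len(q & tokenize(c)), reverse=True)
    return ranked[:top_k]


# Toy document: one relevant fact buried in the middle of filler text.
filler = "Lorem ipsum dolor sit amet. " * 200
fact = "The indemnification cap in the contract is 2 million dollars. "
document = filler + fact + filler

chunks = chunk_text(document)
context = retrieve("What is the indemnification cap?", chunks, top_k=2)

# Only the retrieved chunks go into the prompt, not the full document.
prompt = "Answer using only this context:\n" + "\n---\n".join(context)
print(len(chunks), "chunks in document,", len(context), "sent to the model")
```

A production system would swap the overlap scorer for embeddings and a vector index, but the shape of the pipeline (chunk, score, keep the top few) stays the same.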

Should I fine-tune a model to improve factual accuracy?

Usually not. Fine-tuning teaches a model to mimic a style or follow a format. For factual recall in a specific domain, RAG with a good retriever and a frontier model almost always outperforms fine-tuning a smaller model on the same data — and you can update the knowledge without retraining [Judgment].

Do bigger models always perform better?

No. Distilled and post-trained smaller variants sometimes outperform larger siblings on specific benchmarks [Aggregator]. Reasoning frameworks and post-training matter more than raw parameter count. The "biggest model wins" heuristic from 2023 is dead.

Do benchmark scores predict real-world performance?

Directionally yes, precisely no. Benchmark contamination is real: models that score well on SWE-bench Verified drop by roughly 30 points on the contamination-resistant SWE-bench Pro variant [Primary]. Vendors optimize specifically for the benchmarks that get cited in marketing. A 2-point benchmark difference is usually not detectable in real-world output. Use benchmarks to filter out the bottom half, not to pick between the top three.

Are free or cheap AI models good enough?

For many things, yes — including most low-stakes content generation, summarization, and routine code [Judgment]. They are not good enough for medical decisions, legal analysis, financial advice, or anything where a confidently wrong answer creates real liability. Match the cost of the model to the cost of being wrong.

Do I need an open-weight model for data privacy?

Not necessarily. Closed models accessed through compliant infrastructure (AWS Bedrock with HIPAA, Azure with FedRAMP, Google Cloud with sovereignty controls) can be more private than open-weight models running on a poorly secured local server. The license on the model isn't the same as the security of the deployment.

Will AI replace my profession?

In professional contexts, current AI tools augment expert workflows; they don't replace expert judgment [Judgment]. The right question isn't "will AI replace this job" — it's "which parts of this job will be done faster by an expert using AI than by an expert without AI." Almost all of them.

What is each AI model best at?

What is Claude Opus 4.7 best for?

Claude Opus 4.7 is the best-supported model for real-world coding, agentic tool orchestration, and long-horizon knowledge work as of May 2026. It's developed by Anthropic and priced at $5/$25 per million input/output tokens.

Where evidence is strongest:

- Real-world coding: 87.6% on SWE-bench Verified [Aggregator — swebench.com leaderboard, llm-stats]

- Hard coding: 64.3% on SWE-bench Pro per Anthropic's release materials [Vendor]

- Tool orchestration: 77.3% on MCP-Atlas [Vendor — Anthropic-published benchmark]

- Knowledge work (GDPVal-AA Elo) [Vendor]

- Lower hallucination rate than the OpenAI lineup according to Artificial Analysis evaluations [Aggregator]

- Vision resolution upgraded to 3.75 megapixels — useful for dense document and screenshot analysis [Vendor]

Where evidence is mixed or behind:

- Web research synthesis (BrowseComp dropped 4 points from 4.6 to 4.7 per Anthropic [Vendor]; GPT-5.5 reports higher [Vendor])

- Hardest reasoning benchmarks: Claude Opus 4.6 scored 34.4% on Scale AI's HLE leaderboard [Primary]; 4.7 not yet listed

What is GPT-5.5 best for?

GPT-5.5 is the best-supported model for computer use, terminal automation, web research synthesis, and one-tool-does-everything workflows. Developed by OpenAI and priced at $5/$30 per million input/output tokens.

Where evidence is strongest:

- Composite intelligence: tops the Artificial Analysis Intelligence Index [Aggregator]

- Computer-use tasks: 78.7% on OSWorld-Verified per OpenAI release materials [Vendor], surfaced through Vellum [Aggregator]

- Web research: 89.3% on BrowseComp Pro variant [Vendor]

- Hardest exam: 44.3% on HLE per Artificial Analysis [Aggregator]

- Native integration with the broader OpenAI tool stack — Code Interpreter, image generation, voice — makes it the strongest single-model choice for users who want one tool to do everything [Judgment]

Where evidence is mixed or behind:

- Real-world coding: 88.7% on SWE-bench Verified per OpenAI release notes [Vendor]; not yet on the swebench.com primary leaderboard, where Claude 4.7 holds 87.6% [Primary]. The "GPT-5.5 leads coding" framing is currently vendor-reported [Insufficient — treat the lead as unverified]

What is Gemini 3.1 Pro best for?

Gemini 3.1 Pro is the best-supported model for scientific reasoning, multimodal tasks, and large-document analysis as of May 2026. Developed by Google and priced at $2.50/$15 per million input/output tokens — the best price-to-capability ratio of the three frontier closed models.

Where evidence is strongest:

- Scientific reasoning: 94.3% on GPQA Diamond [Aggregator — llm-stats, Artificial Analysis]

- Hardest exam: 44.7% on HLE per Artificial Analysis evaluations [Aggregator]

- Abstract reasoning: 77.1% on ARC-AGI-2 [Vendor — Google-reported]

- Multimodal tasks: leads Video-MME and most large-document benchmarks [Aggregator]

- Long context: 1M-token context (2M variant available on some access tiers) [Vendor]

Where evidence is mixed or behind:

- Agentic coding: 80.6% on SWE-bench Verified [Aggregator] — competitive but not frontier-leading

- Computer use: no published OSWorld-Verified score [Insufficient]

- Tool use (MCP-Atlas): 73.9% per Anthropic comparison table [Vendor — competitor's benchmark, treat with caution]

Note on top GPQA scores: Differences within ~0.5 points across Claude 4.7 (94.2%), Gemini 3.1 Pro (94.3%), and GPT-5.5 (~94.4%) are statistically indistinguishable. Calling any of them "the leader" overstates the precision of the data.
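A rough worked check of why these gaps are noise, assuming GPQA Diamond's 198 questions and treating each question as an independent pass/fail trial. That is a simplification, since real evaluations also vary with prompting and sampling. The function name is illustrative, and the GPT-5.5 figure is the approximate value quoted above.

```python
import math


def standard_error_points(accuracy_pct: float, n_questions: int) -> float:
    """Binomial standard error of an accuracy score, in percentage points."""
    p = accuracy_pct / 100.0
    return 100.0 * math.sqrt(p * (1.0 - p) / n_questions)


GPQA_DIAMOND_QUESTIONS = 198  # size of the Diamond subset

# Scores as quoted in this guide; the GPT-5.5 value is approximate.
scores = {"Claude Opus 4.7": 94.2, "Gemini 3.1 Pro": 94.3, "GPT-5.5": 94.4}

for name, score in scores.items():
    se = standard_error_points(score, GPQA_DIAMOND_QUESTIONS)
    print(f"{name}: {score:.1f}% +/- {1.96 * se:.1f} points (approx. 95% interval)")

spread = max(scores.values()) - min(scores.values())
one_question = 100.0 / GPQA_DIAMOND_QUESTIONS
print(f"Spread between models: {spread:.1f} points")
print(f"Value of a single question: {one_question:.2f} points")
```

Under these assumptions the whole spread between the three models is smaller than the value of one question, and far smaller than the sampling uncertainty on any single score.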

What is Grok 4 best for?

Grok 4 is most distinctive for real-time X (Twitter) data integration. Developed by xAI and priced at approximately $3/$15 per million tokens [Vendor — varies by tier].

Where evidence is strongest:

- Real-time X (Twitter) data integration — distinctive capability [Vendor]

- Grok 4.20 holds a top-10 position on LMArena [Primary]

Where evidence is mixed or behind:

- xAI submits to fewer public leaderboards than competitors, which makes independent verification harder [Judgment]

- HLE: 24.5% on Scale AI's leaderboard [Primary] — well behind frontier

- xAI's "Grok 4 Heavy 44.4% HLE" claim was not submitted to the official leaderboard for verification [Insufficient]

What is DeepSeek V4 best for?

DeepSeek V4 is the best-supported open-weight model for cost-sensitive deployments at scale, and the price-performance leader as of May 2026. Released April 24, 2026 in preview by DeepSeek AI under MIT license. Two production variants: V4 Pro (1.6T total / 49B active parameters) and V4 Flash (284B total / 13B active).

Where evidence is strongest:

- 1M-token context window for both variants [Vendor]

- Pricing approximately 1/7th to 1/35th the cost of Claude Opus 4.7 on equivalent workloads [Aggregator]

- Coding: 80.6% on SWE-bench Verified per llm-stats [Aggregator]; LiveCodeBench scores rival GPT-5.5 [Aggregator]

- The "best available open-weight model" position by most current measures [Aggregator — consensus across multiple aggregators]

- MIT license — fully permissive for commercial use [Primary — license terms]

Where evidence is mixed or behind:

- DeepSeek's own technical report acknowledges V4 reasoning and agentic capabilities are "comparable to GPT-5.2, Gemini 3.0 Pro, and Claude Opus 4.5" — i.e., one generation behind current frontier [Vendor — DeepSeek's own statement]

- HLE: 37.7% per HuggingFace evaluation [Primary] — behind Gemini 3.1 Pro and GPT-5.5

- Multi-turn agent loops: known integration issues with several IDEs as of early May 2026 [Judgment]

What is Qwen 3.6 Plus best for?

Qwen 3.6 Plus is one of the best-supported open-weight fits for Chinese-language and Asian-language workflows, with stronger proof needed before calling it the outright leader. Developed by Alibaba Cloud / Qwen Team under Apache 2.0 license — genuinely permissive for commercial use.

Where evidence is strongest:

- Strongest open-weight performance on Chinese-language tasks and Asian-language workflows [Vendor + Judgment]

- Math: 92.3% on AIME 2025 [Aggregator]

- GPQA Diamond: 90.4% [Aggregator]

- Apache 2.0 license [Primary — HuggingFace license terms]

Where evidence is mixed or behind:

- Some Qwen 3.6 Max-Preview claims (e.g., #1 on SWE-bench Pro) are self-reported and not yet independently verified [Insufficient]

- Less English-language production tooling than DeepSeek V4 [Judgment]

What is Llama 4 Maverick best for?

Llama 4 Maverick is most useful for cost-sensitive deployments at very high volume, and for workloads needing extremely large context windows (Scout variant supports 10M tokens). Developed by Meta under the Llama license, which is open-weight but not OSI-compliant open source.

Where evidence is strongest:

- Largest practical context option in the open-weight tier — the Scout variant supports 10M tokens [Vendor]

- Native multimodal support [Vendor]

- Cost-sensitive deployments at very high volume where self-hosting economics dominate [Judgment]

Where evidence is mixed or behind:

- Not in the top 50 on most current public reasoning leaderboards [Aggregator]

- GPQA Diamond: older publications suggest ~73-80% range [Aggregator] — Meta hasn't refreshed Llama 4 numbers against current frontier benchmarks [Judgment]

- License is open-weight but restricted: companies with more than 700M monthly active users must obtain a separate license from Meta, so Meta retains control over the largest deployments. Not OSI-compliant open source [Primary — Meta license terms]

Best AI model by industry in 2026

The framing here is task-fit, not authority. The question isn't "what should a hospital use" — it's "given the constraints in healthcare, what's the best-supported current fit per public evidence, and where should you verify before deploying."

Best AI for healthcare and medicine

For most healthcare workflows, Claude Opus 4.7 is the best-supported current fit because Artificial Analysis reports it as having one of the lowest hallucination rates among frontier models [Aggregator]. For medical imaging, Gemini 3.1 Pro's multimodal performance is stronger.

Constraints that matter: HIPAA compliance, hallucination resistance, source citations, regulatory liability.

Best-supported current fits:

- For clinical note summarization and patient communication: Claude Opus 4.7 [Aggregator]

- For medical imaging interpretation: Gemini 3.1 Pro [Aggregator]

- For literature review across thousands of papers: Gemini 3.1 Pro's 1M-2M context window enables single-pass ingestion [Vendor]

- For diagnostic reasoning: GPT-5.5 and Claude Opus 4.7 score within margin of error on relevant reasoning benchmarks [Aggregator]

Constraints to verify before deployment:

- Route through HIPAA-compliant infrastructure (AWS Bedrock with BAA, Google Cloud Vertex AI with HIPAA controls). Consumer-tier API access is not HIPAA-compliant by default.

- For complete data sovereignty (research hospitals, military medicine, proprietary clinical trials), self-hosted DeepSeek V4 or Llama 4 Maverick are the defensible options.

- No model should be used for clinical decisions without expert review.

Best AI for legal work

For long-document legal work like contract review and discovery, Gemini 3.1 Pro is the best-supported current fit because of its 1M-token context window. For drafting and case law research, Claude Opus 4.7 produces cleaner legal prose.

Constraints that matter: precision, citation reliability, large-document handling, confidentiality.

Best-supported current fits:

- For contract review and discovery: Gemini 3.1 Pro's long context window enables full case files in a single pass [Vendor]

- For case law research and brief drafting: Claude Opus 4.7's prose quality and lower hallucination rate [Judgment]

- For litigation strategy and complex reasoning: GPT-5.5 and Claude Opus 4.7 are roughly tied [Aggregator]

Constraints to verify before deployment:

- Verify every citation manually regardless of model. Hallucinated case citations remain a real failure mode across all frontier models [Judgment].

- Route through enterprise infrastructure with explicit data-isolation guarantees for client work.

Best AI for finance and accounting

For spreadsheet automation and Excel-native workflows, GPT-5.5 is the best-supported current fit because of its computer-use scores and OpenAI tool stack integration. For financial modeling, Claude Opus 4.7 leads.

Constraints that matter: numerical precision, structured output, spreadsheet operability, audit trails.

Best-supported current fits:

- For spreadsheet automation and Excel-native workflows: GPT-5.5's computer-use scores (78.7% OSWorld) [Vendor]

- For financial modeling and analytical reasoning: Claude Opus 4.7 leads the FinanceAgent benchmark [Vendor — Anthropic-published, verify before relying]

- For market research synthesis: GPT-5.5's BrowseComp lead [Vendor]

- For document-heavy due diligence: Gemini 3.1 Pro's long context [Vendor]

Constraints to verify before deployment:

- Expert review on every output for regulated financial advice

- Match audit-trail requirements to deployment infrastructure, not model selection

Best AI for scientific research, physics, chemistry, and biology

For graduate-level scientific reasoning, Gemini 3.1 Pro is the best-supported current fit because it scores highest on GPQA Diamond and HLE on Artificial Analysis [Aggregator]. For mathematical proof verification, GPT-5.5 leads.

Constraints that matter: correctness on hard reasoning, math accuracy, specialized domain knowledge, multimodal capability for figures and diagrams.

Best-supported current fits:

- For graduate-level scientific reasoning: Gemini 3.1 Pro scores highest on GPQA Diamond (94.3%) and HLE (44.7%) [Aggregator]

- For mathematical proof verification and FrontierMath-style problems: GPT-5.5 [Vendor]

- For literature synthesis across thousands of papers: Gemini 3.1 Pro's long context [Vendor]

- For interpreting figures, charts, and scientific diagrams: Claude Opus 4.7 (post-vision upgrade) and Gemini 3.1 Pro both strong [Aggregator]

Reality check: Frontier scores on HLE are ~45%, while expert humans are reported to score substantially higher on the same material, per the context published with Scale AI's leaderboard [Primary]. Use AI for literature synthesis, hypothesis generation, and code; use expert review for any conclusion that gets published.

Best AI for software engineering

For real-world bug fixing and production coding agents, Claude Opus 4.7 is the best-supported current fit because it holds the strongest publicly verified position on SWE-bench Verified at 87.6% [Aggregator]. For terminal automation, GPT-5.5 leads.

Constraints that matter: code quality, multi-file coherence, tool integration, terminal proficiency.

Best-supported current fits:

- For real-world bug fixing in production codebases: Claude Opus 4.7 [Aggregator]; Claude remains widely favored in coding-agent workflows including Cursor and Cognition (Devin), but this reflects practitioner and market observation rather than benchmark proof [Judgment]

- For terminal-based automation and shell-heavy ops: GPT-5.5 leads Terminal-Bench 2.0 at 82.7% [Vendor]

- For massive codebase ingestion and cross-repo analysis: Gemini 3.1 Pro's context window [Vendor]

- For cost-sensitive coding (CI tools, batch refactoring): DeepSeek V4 Pro at a fraction of the price clears most production tasks [Aggregator + Judgment]

Constraints to verify before deployment:

- The reported 88.7% SWE-bench Verified score for GPT-5.5 comes from OpenAI's release notes and does not appear on the swebench.com primary leaderboard [Insufficient]. Treat coding leadership as a tie at the top for now.

Best AI for marketing, content, SEO, and AEO

For long-form content with consistent voice, Claude Opus 4.7 is the best-supported current fit per practitioner consensus [Judgment]. For sitewide SEO and AEO audits, Gemini 3.1 Pro's long context wins.

Constraints that matter: prose quality, factual reliability, brand voice consistency, source citation for AI-search visibility.

Best-supported current fits:

- For long-form content (articles, white papers): Claude Opus 4.7 [Judgment]

- For structured marketing content (landing pages, schema markup): GPT-5.5 follows formatting instructions reliably [Judgment]

- For SEO and AEO content audits across large sites: Gemini 3.1 Pro's long context enables sitewide entity and citation analysis [Vendor]

Constraints to verify before deployment:

- For AEO specifically, post-generation verification matters more than the generation model: explicit fact-checking, citation auditing, and entity consistency checks. All frontier models hallucinate in subtle ways that hurt AI search citations downstream [Judgment].

Best AI for education

For tutoring and explanation generation, Claude Opus 4.7 is the best-supported current fit because it combines clarity with reportedly lower hallucination rates than other frontier models per Artificial Analysis [Aggregator]. For multimodal educational content, Gemini 3.1 Pro is stronger.

Constraints that matter: clear explanation, age-appropriate framing, factual accuracy, low hallucination rate.

Best-supported current fits:

- For tutoring and explanation generation: Claude Opus 4.7 [Judgment + Aggregator]

- For multimodal educational content: Gemini 3.1 Pro [Aggregator]

- For non-English educational content: Qwen 3.6 Plus on Chinese and most Asian languages [Vendor + Judgment]

- For automated grading at scale: mid-tier models (Claude Sonnet 4.6, GPT-5 mini) clear the threshold at lower cost [Judgment]

Best AI for creative work and design

For copywriting and screenwriting, Claude Opus 4.7 is the practitioner consensus [Judgment]. For video understanding and editing, Gemini 3.1 Pro leads Video-MME by a wide margin.

Constraints that matter: voice, originality, consistency, multimodal generation.

Best-supported current fits:

- For copywriting and screenwriting: Claude Opus 4.7 [Judgment]

- For brainstorming and structured creative frameworks: GPT-5.5 [Judgment]

- For image generation: GPT-5.5 (integrated DALL-E successor) and Gemini's image gen are competitive [Aggregator]; specialized models (Midjourney, Flux) often outperform general models for specific aesthetic targets [Judgment]

- For video understanding: Gemini 3.1 Pro [Aggregator]

Best AI for customer service and operations

For high-volume chatbot deployments, Claude Sonnet 4.6 or Haiku 4.5 are the best-supported current fits because they clear the "good enough" threshold at a fraction of frontier cost [Judgment].

Constraints that matter: cost per interaction, latency, consistency, escalation handling.

Best-supported current fits:

- For high-volume chatbot deployments: Claude Sonnet 4.6 or Haiku 4.5 [Judgment]; GPT-5 mini is similarly suited

- For self-hosted customer service: DeepSeek V4 Flash is the cost leader [Aggregator]

Reality check: Most high-volume customer-service workloads should not default to frontier models unless escalation quality justifies the cost. Cost-per-conversation math typically doesn't work, and frontier models tend to over-think simple queries [Judgment].

Best AI for data analysis

For Python/pandas-heavy analytical workflows, GPT-5.5 is the best-supported current fit because of Code Interpreter integration [Judgment]. For SQL generation, Claude Opus 4.7's coding lead applies.

Constraints that matter: code generation accuracy, statistical reasoning, large dataset handling.

Best-supported current fits:

- For SQL generation and exploratory analysis: Claude Opus 4.7 [Aggregator]

- For Python/pandas workflows with Code Interpreter integration: GPT-5.5 [Judgment]

- For very large datasets exceeding normal context windows: Gemini 3.1 Pro's long context [Vendor]

Best AI for government and public sector

For US federal workloads, route Claude or GPT through FedRAMP-authorized infrastructure. For state and local government with strict data residency, self-hosted DeepSeek V4 or Llama 4 Maverick are the defensible choices.

Constraints that matter: data sovereignty, auditability, compliance with regional regulations.

Best-supported current fits:

- US federal: Claude or GPT through FedRAMP-authorized infrastructure (AWS GovCloud, Azure Government) [Vendor]

- State and local with strict residency: self-hosted DeepSeek V4 or Llama 4 Maverick [Vendor]

- EU public sector under GDPR and AI Act: explicitly EU-resident options like Mistral and Aleph Alpha worth evaluating [Judgment]

Best AI for translation and multilingual content

For Chinese and Asian-language production, Qwen 3.6 Plus is the best-supported open-weight fit [Vendor + Judgment]. For broad multilingual coverage, Gemini 3.1 Pro wins.

Constraints that matter: language coverage, cultural appropriateness, technical terminology handling.

Best-supported current fits:

- For Chinese-language production: Qwen 3.6 Plus [Vendor + Judgment]

- For broad multilingual coverage including European, Asian, and major African languages: Gemini 3.1 Pro [Aggregator]

- For high-stakes translation (legal, medical): pair any of the above with explicit verification workflows [Judgment]

How to choose the right AI model: a 5-step framework

Step 1: Identify the cost of being wrong. A blog post draft with a typo costs nothing. A medical recommendation costs everything. The cost of being wrong determines how much capability you actually need.

Step 2: Match capability to that cost. If the cost of being wrong is high, you want a frontier model with verification workflows. If the cost is low, you want the cheapest model that produces output above your quality bar.

Step 3: Check the constraint stack. Data sovereignty? Self-hosted open weights. Regulatory compliance? Closed models on compliant infrastructure. Very high volume? Cost-per-token math determines the answer regardless of capability differences.

Step 4: Run a real test. Benchmark differences of less than 3 points are rarely visible in practice. Benchmark differences in the same task category of more than 5 points usually are. Test the top two candidates on 20 actual tasks from your real workflow before standardizing.
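Step 4 can be sketched as a small paired comparison. This is an illustration under assumptions: `model_a`, `model_b`, and `passes` are hypothetical placeholders for your own API calls and your own acceptance check, and the stubs below return canned answers so the sketch runs offline.

```python
from typing import Callable


def compare_models(
    tasks: list[str],
    run_a: Callable[[str], str],
    run_b: Callable[[str], str],
    passes: Callable[[str, str], bool],
) -> dict[str, int]:
    """Run both models on the same tasks and count paired outcomes."""
    counts = {"both_pass": 0, "only_a": 0, "only_b": 0, "both_fail": 0}
    for task in tasks:
        a_ok = passes(task, run_a(task))
        b_ok = passes(task, run_b(task))
        if a_ok and b_ok:
            counts["both_pass"] += 1
        elif a_ok:
            counts["only_a"] += 1
        elif b_ok:
            counts["only_b"] += 1
        else:
            counts["both_fail"] += 1
    return counts


# Offline stubs standing in for real model calls (hypothetical behaviour).
def model_a(task: str) -> str:
    return "bad" if int(task.split("-")[1]) % 10 == 0 else "ok"


def model_b(task: str) -> str:
    return "bad" if int(task.split("-")[1]) % 4 == 0 else "ok"


def passes(task: str, output: str) -> bool:
    # Replace with a real check: unit tests pass, reviewer approves, etc.
    return output == "ok"


tasks = [f"task-{i}" for i in range(20)]  # 20 tasks from your own workflow
counts = compare_models(tasks, model_a, model_b, passes)
print(counts)
```

The tasks where only one model passes are the ones that matter for the decision. With 20 tasks, a handful of such cases is suggestive rather than conclusive, so read the failing outputs before standardizing.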

Step 5: Plan for routing, not picking. More mature AI deployments increasingly avoid picking a single model. They route different requests to different models based on task type, complexity, and cost. A customer service agent might use Haiku for routine queries, escalate to Sonnet for complex ones, and route legal/financial questions to Claude Opus 4.7 with mandatory expert review.
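The customer service example in Step 5 can be sketched as a routing function. The tier names come from the example above; the `Request` fields, the topic labels, and the complexity threshold are hypothetical placeholders that a real deployment would replace with its own classifier and policy.

```python
from dataclasses import dataclass

# Tier names follow the example in the text. Everything else here
# (field names, topic labels, threshold) is an illustrative assumption.
ROUTINE_MODEL = "Haiku"
COMPLEX_MODEL = "Sonnet"
HIGH_STAKES_MODEL = "Claude Opus 4.7"
HIGH_STAKES_TOPICS = {"legal", "financial"}


@dataclass
class Request:
    topic: str         # e.g. "billing", "legal", "financial"
    complexity: float  # 0.0 (trivial) to 1.0 (hard), from your own classifier


@dataclass
class Route:
    model: str
    needs_expert_review: bool


def route(request: Request, complexity_threshold: float = 0.6) -> Route:
    """Pick a model tier for one request; high-stakes topics always escalate."""
    if request.topic in HIGH_STAKES_TOPICS:
        return Route(HIGH_STAKES_MODEL, needs_expert_review=True)
    if request.complexity >= complexity_threshold:
        return Route(COMPLEX_MODEL, needs_expert_review=False)
    return Route(ROUTINE_MODEL, needs_expert_review=False)
```

Checking the high-stakes topics first is a deliberate choice in this sketch: a simple-looking legal question still goes to the strongest tier with mandatory review, regardless of its complexity score.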

Frequently asked questions

What is the best AI model in 2026?

There is no single best AI model in 2026. Different models lead on different benchmarks: Claude Opus 4.7 leads SWE-bench Verified for coding [Aggregator], Gemini 3.1 Pro leads GPQA Diamond and HLE for scientific reasoning [Aggregator], GPT-5.5 leads OSWorld for computer use [Vendor], and DeepSeek V4 leads price-performance in the open-weight tier [Aggregator]. The right choice depends on your task, budget, and constraints.

Is Claude better than ChatGPT for coding?

For real-world coding on production codebases, Claude Opus 4.7 holds the strongest publicly verified position on SWE-bench Verified at 87.6% [Aggregator — swebench.com]. OpenAI's GPT-5.5 release notes claim 88.7% but that number has not been verified on the official leaderboard [Insufficient]. Claude remains widely favored in coding-agent workflows including Cursor and Cognition (Devin), but this reflects practitioner and market observation rather than benchmark proof [Judgment]. For terminal automation specifically, GPT-5.5 leads Terminal-Bench 2.0 [Vendor].

Should I use ChatGPT or Claude for medical work?

Both have a role. Artificial Analysis reports Claude Opus 4.7 as having one of the lowest hallucination rates among frontier models [Aggregator], making it a safer default for clinical note summarization and patient communication. GPT-5.5 is competitive on diagnostic reasoning. Neither should be used for clinical decisions without expert review, and both must be deployed through HIPAA-compliant infrastructure for any patient data.

What's the cheapest AI model with frontier-level performance?

DeepSeek V4 Pro, released April 24, 2026 in preview, is the cheapest model with near-frontier performance — approximately 1/7th to 1/35th the cost of Claude Opus 4.7 on equivalent workloads [Aggregator]. DeepSeek's own technical report acknowledges V4 trails the closed frontier by 3-6 months [Vendor]. For tasks where that gap doesn't matter, V4 is the price-performance winner.
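To see what the quoted 1/7th to 1/35th range means for a budget, the arithmetic can be sketched as follows. The two ratios come from the answer above; the function names are hypothetical, and any price or token volume you plug in is your own input, not a published figure.

```python
# Cost ratios quoted in the text: roughly 1/7th to 1/35th of the baseline.
COST_RATIOS = (1 / 7, 1 / 35)


def monthly_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Monthly spend for a given token volume and per-million-token price."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def cheaper_model_cost_range(baseline_monthly_cost: float,
                             ratios: tuple = COST_RATIOS) -> tuple:
    """Return (highest, lowest) estimated monthly cost at the quoted ratios."""
    costs = [baseline_monthly_cost * ratio for ratio in ratios]
    return max(costs), min(costs)
```

For example, with a placeholder baseline of $10 per million tokens and 500 million tokens per month, the baseline comes to $5,000 and the quoted ratios imply roughly $143 to $714 per month. Real savings depend on actual prices and on the input/output token mix of the workload.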

Is DeepSeek V4 actually as good as Claude Opus 4.7 or GPT-5.5?

On many benchmarks, yes — DeepSeek V4 Pro scores 80.6% on SWE-bench Verified [Aggregator] and is competitive on coding and math. On the hardest reasoning benchmarks (HLE, FrontierMath) and on agentic computer use, the gap to closed frontier models is real and acknowledged by DeepSeek itself [Vendor]. For moderate tasks at scale, V4 is excellent. For frontier reasoning, it's not yet equivalent.

Is open-source AI as good as closed-source AI?

It depends on the task. For moderate workloads — most coding, content generation, summarization, customer service — open-weight models like DeepSeek V4 and Qwen 3.6 are now competitive [Aggregator]. For frontier reasoning tasks, the hardest exam benchmarks, and computer use, closed models still lead [Aggregator]. The gap is compressing month over month, but it has not closed.

What AI model has the largest context window?

Llama 4 Scout has the largest published context window at 10M tokens [Vendor], though long-context performance degrades through the middle of the window across all models. Among models with proven long-context performance, Gemini 3.1 Pro at 1M-2M tokens is the most reliable choice for large-document workflows [Vendor]. DeepSeek V4 Pro and V4 Flash both support 1M tokens [Vendor].

Which AI model hallucinates the least?

Artificial Analysis reports Claude Opus 4.7 as having one of the lowest hallucination rates among frontier models [Aggregator]. All frontier models hallucinate at meaningful rates on factual recall — verification workflows are essential regardless of model choice. For high-stakes domains (medical, legal, scientific), pair any model with explicit fact-checking and citation auditing [Judgment].

What's the difference between SWE-bench Verified and SWE-bench Pro?

SWE-bench Verified is a human-validated subset of 500 GitHub issues; it's the standard coding benchmark cited in most marketing. SWE-bench Pro is the contamination-resistant variant with multi-language tasks. The same models drop ~30 points on Pro versus Verified — a strong signal that Verified scores are partially inflated by training-data contamination [Primary]. When comparing models, Pro scores are more reliable.

How often do AI benchmark leaderboards change?

Frequently. SWE-bench Verified and HLE leaderboards update with each major model release. LMArena Elo scores update continuously based on user votes. Aggregator sites like llm-stats.com and Artificial Analysis refresh weekly or daily. Any "best model" article published more than 60 days ago is likely out of date. Always check the publication date.

Glossary: AI benchmarks explained

SWE-bench Verified — A human-validated subset of 500 GitHub issues testing whether models can resolve real-world coding bugs. Standard coding benchmark; known contamination concerns.

SWE-bench Pro — The harder, contamination-resistant variant of SWE-bench. Multi-language tasks. Same models score ~30 points lower than on Verified.

GPQA Diamond — 198 graduate-level science questions in biology, physics, and chemistry, the hardest subset of the 448-question GPQA set. Tests reasoning beyond memorization. PhD experts score ~65%.

AIME 2025 — American Invitational Mathematics Examination 2025 problems. Tests olympiad-level math reasoning. Now saturated at the frontier (multiple models at 100%).

HLE (Humanity's Last Exam) — 2,500 expert-level questions across mathematics, humanities, and natural sciences. Designed to be unsaturatable. Frontier models currently score around 45% on Artificial Analysis; expert performance on the material is reported to be substantially higher per Scale AI's published leaderboard context.

OSWorld-Verified — Benchmark for desktop computer-use tasks. Models operate real software interfaces (Ubuntu, Windows, macOS) and complete multi-step workflows. Human expert baseline ~72%.

MCP-Atlas — Scale AI benchmark for Model Context Protocol tool use. Tests how reliably models orchestrate external tools.

LMArena — Crowdsourced human preference benchmark (formerly LMSYS Chatbot Arena). Users blind-vote between two model responses. Produces Elo ratings.

ARC-AGI-2 — Abstraction and Reasoning Corpus for Artificial General Intelligence, version 2. Tests genuine novel reasoning rather than pattern matching. Designed to be resistant to training contamination.

BrowseComp — Web research and synthesis benchmark. Tests how reliably models pull and synthesize information across multiple web pages.

Terminal-Bench 2.0 — Terminal/command-line agentic task benchmark. Tests shell automation and multi-step terminal workflows.

FrontierMath — Hard mathematical reasoning benchmark across multiple difficulty tiers. Designed to remain hard for frontier models.

Artificial Analysis Intelligence Index — Composite score across multiple benchmarks. Useful as a top-line summary; verify the underlying components for any specific use case.
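As a reading aid for the Elo ratings mentioned in the LMArena entry, the classic Elo expected-score formula turns a rating gap into an approximate head-to-head preference rate. This is a sketch under the assumption that the published ratings sit on the standard 400-point Elo scale; LMArena's own rating fit may use a different statistical procedure, and the function name is hypothetical.

```python
def expected_win_probability(rating_a: float, rating_b: float) -> float:
    """Classic Elo expected score for A against B (1.0 means A always preferred)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))
```

Under this formula, equal ratings give 0.5 and a 100-point lead corresponds to being preferred in about 64% of blind votes, which is why small rating gaps near the top of the leaderboard rarely indicate a clear winner.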

Sources and methodology

This guide synthesizes data from:

- Primary leaderboards: swebench.com, lmarena.ai, Scale AI SEAL, agi.safe.ai (HLE)

- Aggregators: llm-stats.com, Artificial Analysis, BenchLM.ai, Vellum

- Vendor documentation: Anthropic, OpenAI, Google, xAI, DeepSeek, Alibaba/Qwen, Meta release blogs and technical reports

- Independent analysis: Council on Foreign Relations DeepSeek V4 analysis (April 2026), MIT Technology Review

Confidence tags ([Primary] / [Aggregator] / [Vendor] / [Judgment] / [Insufficient]) indicate the strength of the underlying evidence for each claim. Verified May 1, 2026.
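For readers who want to audit the tags programmatically, a minimal sketch of a tag extractor follows. The tag names come from the list above; reading that list as strongest-to-weakest is an assumption of this sketch, and the function names are hypothetical.

```python
import re
from typing import List, Optional

# Order copied from the list in the text; treating it as
# strongest-to-weakest evidence is an assumption of this sketch.
TAG_ORDER = ["Primary", "Aggregator", "Vendor", "Judgment", "Insufficient"]
BRACKET_PATTERN = re.compile(r"\[([^\[\]]+)\]")


def extract_tags(text: str) -> List[str]:
    """Return the known confidence tags found inside [...] brackets."""
    found = []
    for bracket in BRACKET_PATTERN.findall(text):
        for tag in TAG_ORDER:
            if re.search(rf"\b{tag}\b", bracket):
                found.append(tag)
    return found


def weakest_tag(text: str) -> Optional[str]:
    """Return the weakest tag in the text, or None if the text is untagged."""
    tags = extract_tags(text)
    if not tags:
        return None
    return max(tags, key=TAG_ORDER.index)
```

Combined tags such as [Vendor + Judgment] yield both names, so a claim can be judged by its weakest supporting evidence.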

The AI landscape changes monthly. Treat specific benchmark numbers as snapshots and the underlying selection framework as durable. For the latest benchmark snapshot, see the companion comparison chart (AI model benchmarks).

Have a correction or pushback on a specific claim? Corrections by email are welcome; that is the point of the confidence tags. Disagreement should be about evidence, not vibes.